MatCam

MatCam (A Camera that Sees Materials)

The proposed research program will realize the next step in camera evolution: the creation of the first material camera, or MatCam, which outputs a label of an object's material and its properties for use in any visual computing task. The everyday world contains a vast number of materials that are useful to discern, including concrete, metal, plastic, velvet, satin, a water layer on asphalt, carpet, tile, skin, hair, wood and marble. An in-hand device for identifying these materials has important implications for developing new algorithms and new technologies across a broad set of application domains. For example, a mobile robot can use MatCam to determine whether the terrain is grass, gravel, pavement, or snow in order to optimize mechanical control. Potential applications span robotics, digital architecture, human-computer interaction, intelligent vehicles and advanced manufacturing. Furthermore, material maps are of foundational importance to many vision algorithms, including segmentation, feature matching, scene recognition, image-based rendering, context-based search, object recognition and motion estimation.

References

We propose a Deep Texture Encoding Network (Deep-TEN) with a novel Encoding Layer integrated on top of convolutional layers, which ports the entire dictionary learning and encoding pipeline into a single model. Current methods are built from distinct components, using standard encoders with separate off-the-shelf features such as SIFT descriptors or pre-trained CNN features for material recognition. Our new approach provides an end-to-end learning framework, where the inherent visual vocabularies are learned directly from the loss function. That is, the features, dictionaries and the encoding representation for the classifier are all learned simultaneously. The representation is orderless and is therefore particularly useful for material and texture recognition. The Encoding Layer generalizes robust residual encoders such as VLAD and Fisher Vectors, and has the property of discarding domain-specific information, which makes the learned convolutional features easier to transfer. Additionally, joint training on multiple datasets of varied sizes and class labels is supported, resulting in increased recognition performance. The experimental results show superior performance compared to state-of-the-art methods on gold-standard databases such as MINC-2500, the Flickr Material Database, KTH-TIPS-2b, and a new ground terrain multiview database. The source code for the complete system is publicly available.
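The orderless residual encoding described above can be illustrated with a small sketch. It assumes a soft-assignment form in which each descriptor's residual to every codeword is weighted by a softmax over negative scaled squared distances; the function name `encode`, the fixed `codewords` and the `scales` values are illustrative assumptions, not the released implementation, and in the actual network these parameters would be learned from the loss.

```python
import math

def encode(features, codewords, scales):
    """Aggregate N descriptors into K weighted residual vectors (one per codeword)."""
    dim = len(codewords[0])
    encoded = [[0.0] * dim for _ in codewords]
    for x in features:
        # Residual of this descriptor to every codeword.
        residuals = [[xi - ci for xi, ci in zip(x, c)] for c in codewords]
        # Soft-assignment weights: softmax over negative scaled squared distances.
        logits = [-s * sum(r * r for r in res) for s, res in zip(scales, residuals)]
        peak = max(logits)  # subtract the max for numerical stability
        weights = [math.exp(l - peak) for l in logits]
        total = sum(weights)
        # Accumulate weighted residuals; summation makes the result orderless.
        for k, res in enumerate(residuals):
            w = weights[k] / total
            for d in range(dim):
                encoded[k][d] += w * res[d]
    return encoded

# Hypothetical toy data: three 2-D descriptors and two codewords.
features = [[0.0, 0.1], [1.0, 0.9], [0.2, 0.0]]
codewords = [[0.0, 0.0], [1.0, 1.0]]
scales = [1.0, 1.0]

enc = encode(features, codewords, scales)
enc_shuffled = encode(list(reversed(features)), codewords, scales)
```

Because the descriptors are summed, `enc` and `enc_shuffled` agree up to floating-point rounding, which is the sense in which the representation is orderless.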

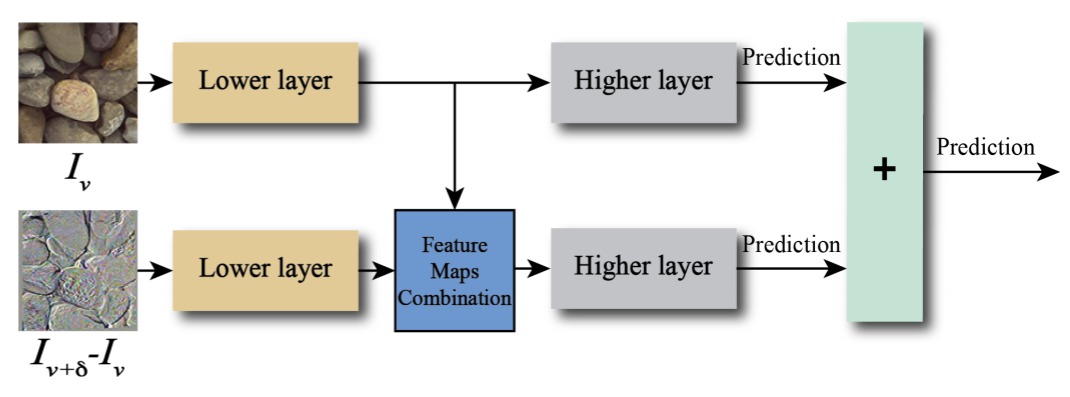

Material recognition for real-world outdoor surfaces has become increasingly important for computer vision to support its operation "in the wild." Reflecting these needs, the computational surface modeling that underlies material recognition has transitioned from reflectance modeling using in-lab controlled radiometric measurements to image-based representations based on internet-mined images of materials captured in the scene. We propose a middle-ground approach to material recognition that takes advantage of both rich radiometric cues and flexible image capture. We realize this by developing a framework for differential angular imaging, where small angular variations in image capture provide an enhanced appearance representation and a significant recognition improvement. We build a large-scale material database, the Ground Terrain in Outdoor Scenes (GTOS) database, geared towards real use by autonomous agents. The database consists of over 30,000 images covering 40 classes of outdoor ground terrain under varying weather and lighting conditions. We develop a novel approach for material recognition called a Differential Angular Imaging Network (DAIN) to fully leverage this large dataset. With this novel network architecture, we extract characteristics of materials encoded in the angular and spatial gradients of their appearance. Our results show that DAIN achieves recognition performance that surpasses single-view or coarsely quantized multiview images. These results demonstrate the effectiveness of differential angular imaging as a means for flexible, in-place material recognition.
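As a rough illustration of the two kinds of gradient mentioned above, the sketch below forms a differential image from two views taken a small angle apart and a spatial gradient within a single view. The function names and the tiny 2x2 "images" are hypothetical, and how DAIN actually combines these signals inside the network is not shown here.

```python
def differential_image(view, neighbor_view):
    """Per-pixel difference between two views captured a small angle apart."""
    return [[b - a for a, b in zip(row_a, row_b)]
            for row_a, row_b in zip(view, neighbor_view)]

def spatial_gradient_x(image):
    """Forward difference along each row; the last column is padded with 0."""
    return [[row[j + 1] - row[j] for j in range(len(row) - 1)] + [0.0]
            for row in image]

# Hypothetical intensities for one surface patch seen from two nearby angles.
view = [[0.25, 0.5], [0.75, 1.0]]
neighbor_view = [[0.5, 0.5], [0.5, 1.0]]

diff = differential_image(view, neighbor_view)  # angular change in appearance
grad_x = spatial_gradient_x(view)               # spatial change within one view
```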

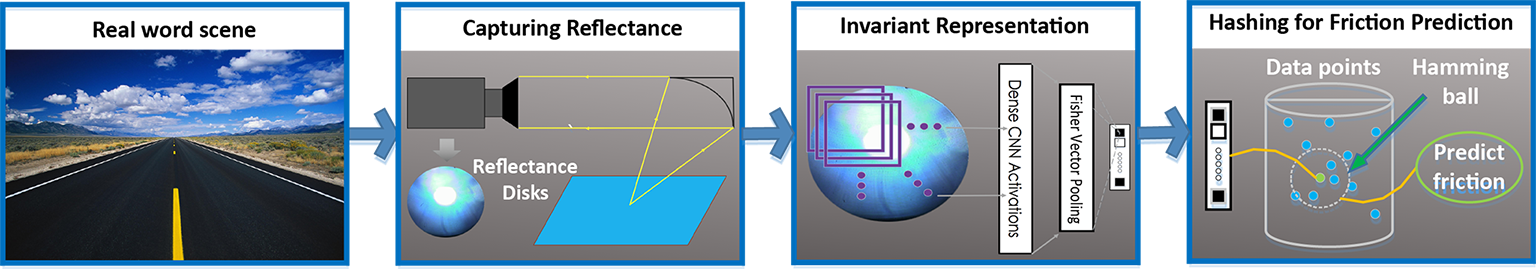

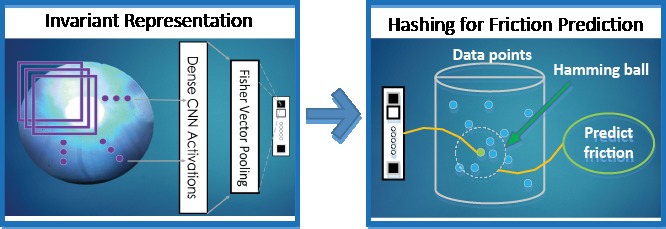

Images are the standard input for vision algorithms, but one-shot in-field reflectance measurements are creating new opportunities for recognition and scene understanding. In this work, we address the question of what reflectance can reveal about materials in an efficient manner. We go beyond recognition and labeling and ask: what intrinsic physical properties of the surface can be estimated using reflectance? We introduce a framework that enables prediction of actual friction values for surfaces using one-shot reflectance measurements. This work is a first-of-its-kind vision-based friction estimation. We develop a novel representation for reflectance disks that capture partial BRDF measurements instantaneously. Our method of deep reflectance codes combines CNN features and Fisher vector pooling with optimal binary embedding to create codes that have sufficient discriminatory power and important properties of illumination and spatial invariance. The experimental results demonstrate that reflectance can play a new role in deciphering the underlying physical properties of real-world scenes.
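To make the idea of estimating friction from binary codes concrete, here is a deliberately simplified sketch. It assumes a plain sign threshold in place of the optimal binary embedding described above, and a nearest-neighbor lookup in Hamming space in place of whatever estimator the released code uses; the feature vectors and friction coefficients are invented for illustration only.

```python
def binarize(feature):
    """Stand-in binary embedding: one bit per dimension, set when the value is positive."""
    return [1 if v > 0 else 0 for v in feature]

def hamming(a, b):
    """Number of bit positions in which two codes differ."""
    return sum(x != y for x, y in zip(a, b))

def estimate_friction(query_feature, database):
    """Return the friction value stored with the closest code in Hamming space."""
    code = binarize(query_feature)
    _, friction = min(database, key=lambda entry: hamming(code, entry[0]))
    return friction

# Hypothetical database of (binary code, measured kinetic friction coefficient).
database = [
    (binarize([0.9, -0.2, 0.4, -0.7]), 0.35),
    (binarize([-0.5, 0.8, -0.1, 0.3]), 0.62),
]

estimate = estimate_friction([0.7, -0.1, -0.3, -0.9], database)
```

Compact binary codes keep the lookup cheap, since comparing two codes only requires counting differing bits.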

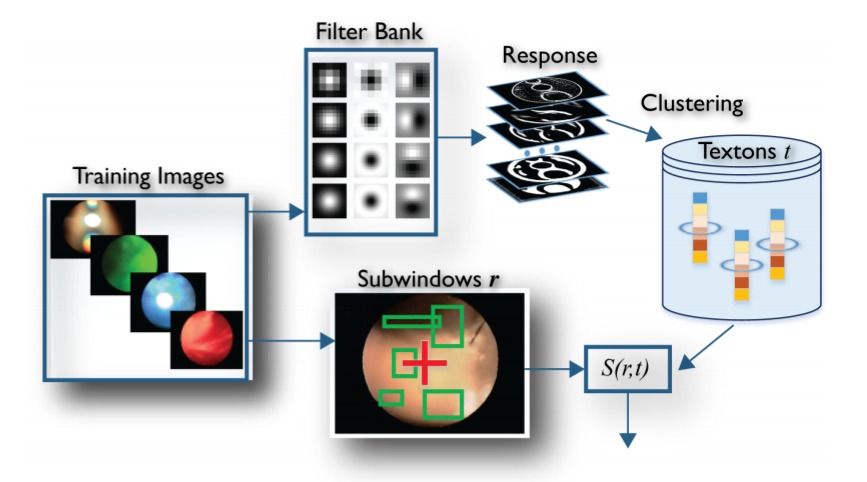

We introduce a novel method for using reflectance to identify materials. Reflectance offers a unique signature of the material but is challenging to measure and use for recognizing materials due to its high dimensionality. In this work, the one-shot reflectance of a material surface, which we refer to as a reflectance disk, is captured using a unique optical camera. The pixel coordinates of these reflectance disks correspond to the surface viewing angles. The reflectance has class-specific structure, and angular gradients computed in this reflectance space reveal the material class. These reflectance disks encode discriminative information for efficient and accurate material recognition. We introduce a framework called reflectance hashing that models the reflectance disks with dictionary learning and binary hashing. We demonstrate the effectiveness of reflectance hashing for material recognition with a number of real-world materials.
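Since pixel coordinates on a reflectance disk correspond to viewing angles, finite differences across the disk act as angular gradients. The sketch below computes such gradients and turns them into a binary code by thresholding the responses to a few dictionary atoms. The atoms here are hand-picked placeholders, and the thresholding rule is an assumption standing in for the learned dictionary and hashing functions.

```python
def angular_gradients(disk):
    """Central differences along both axes of a 2-D reflectance disk (interior pixels only)."""
    rows, cols = len(disk), len(disk[0])
    grads = []
    for i in range(1, rows - 1):
        for j in range(1, cols - 1):
            d_row = (disk[i + 1][j] - disk[i - 1][j]) / 2.0
            d_col = (disk[i][j + 1] - disk[i][j - 1]) / 2.0
            grads.extend([d_row, d_col])
    return grads

def hash_code(descriptor, atoms):
    """One bit per atom: 1 when the descriptor has a positive response to that atom."""
    return [1 if sum(d * a for d, a in zip(descriptor, atom)) > 0 else 0
            for atom in atoms]

# Hypothetical 3x3 disk whose reflectance increases along one angular axis.
disk = [[0.0, 0.0, 0.0],
        [0.5, 0.5, 0.5],
        [1.0, 1.0, 1.0]]
# Placeholder dictionary atoms (a real dictionary would be learned from data).
atoms = [[1.0, 0.0], [-1.0, 0.0], [0.0, 1.0]]

grads = angular_gradients(disk)
code = hash_code(grads, atoms)
```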

Software & Datasets

| Filename | Description | Size |
|---|---|---|
| Deep-Encoding-master.zip | Software toolkit: Torch implementation of the Encoding Layer (Paper 2017). Reproduces the experimental results of Deep-TEN. | 42K |
| Deep-Reflectance-Code-master.zip | Software toolkit: Reproduces the experimental results of DRC (ECCV 2016). Hashing for material recognition and friction estimation. Baseline approaches for image retrieval. | 214K |
| RF2016.zip | The package contains reflectance disks of 137 materials (5754 images) and the measured coefficient of kinetic friction of each material sample. | 1.15G |

Created by Hang Zhang.